Hey there! If you’re a tech student or just diving into the world of IT, you’ve probably heard the name "Nginx" (pronounced "engine-x") whispered in server rooms or mentioned in your lectures. It sounds complex, but I promise, the core idea is pretty simple and super powerful.

Ever visited a website that just refused to load? You click, you wait, you get the spinning wheel of doom. It’s frustrating, right? Now, think about the massive sites you use every day—Netflix, Google, Instagram. They handle millions of users at the exact same time without breaking a sweat.

How do they do it? While there are many pieces to that puzzle, one of the most widely used "secret weapons" on the modern web is Nginx.

I remember when I first started, I thought, "It's just another web server, like Apache, right?" Well, yes... and no. It can be a web server, but that's like saying a smartphone is "just a device that makes calls." It does so much more.

In this guide, I'm going to break down what Nginx is, why it's different from the old-school tools, and how it became the go-to choice for building fast, scalable, and reliable websites. We'll cover everything from the basics to the cool tricks it has up its sleeve.

So, What is Nginx, Really?

At its heart, Nginx is a high-performance web server. It was created by Russian engineer Igor Sysoev, who started writing it in 2002 and released it publicly in 2004. His goal? To solve a massive problem called C10k.

The C10k problem was the challenge of getting a single web server to handle ten thousand (10k) connections at the same time. Back then, most servers would bog down or run out of memory under that kind of load.

Nginx was built from the ground up to handle this exact problem.

The Super-Efficient Waiter Analogy

Think of a web server like a waiter in a restaurant.

The old-school way (like Apache, its main rival, in its classic setup) works like this:

A waiter takes your order, walks it to the kitchen, waits for the food to be cooked, picks it up, and then delivers it to your table. Only after you're all set does this waiter move on to the next table. If 100 tables sit down at once, you need 100 waiters, which is chaotic and uses a ton of resources.

This is called a process-per-connection (or thread-per-connection) model. It works, but it doesn't scale well, because every waiting connection ties up its own chunk of memory.

Now, here’s how Nginx handles high traffic and concurrency:

Nginx is like one super-efficient waiter. This waiter takes your order, hands it to the kitchen, and immediately moves to the next table to take their order. And the next, and the next. As soon as a dish is ready, the waiter (who is already on the floor) grabs it and delivers it.

This is called an asynchronous, event-driven architecture. Nginx doesn't wait around. Each worker process (usually one per CPU core) juggles thousands of connections at once, just listening for "events" like "new connection," "data ready," or "connection closed."

This is why Nginx is incredibly lightweight and can handle a massive number of connections with very little memory.
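Here's where the event-driven design shows up in practice. This minimal sketch shows the two settings that control it. The values are illustrative starting points, not tuned recommendations.

```nginx
# Main context: start one worker process per CPU core
worker_processes auto;

events {
    # Each worker can hold up to this many simultaneous connections
    # (clients plus connections to backends), all in one event loop
    worker_connections 1024;
}
```

On a 4-core machine, `auto` starts 4 workers, so this allows roughly 4 × 1,024 = 4,096 simultaneous connections in total. That count includes the connections Nginx opens to backends, and your operating system's open-file limit can cap it lower.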

Is Nginx Free?

Yes! Nginx is open-source and completely free to use. This is the version that powers millions of websites.

There is also a commercial version called Nginx Plus, developed by F5, which acquired the company behind Nginx in 2019. It adds enterprise-level features like advanced monitoring and active health checks, plus commercial support. For most projects (and for learning), though, the open-source version is all you'll ever need.

The Big Showdown: Nginx vs. Apache

This is the classic debate. For a long time, Apache was the king of web servers. But Nginx has rapidly taken over, especially for high-traffic sites.

So, how does Nginx differ from Apache? We already covered the biggest difference: the connection handling (event-driven vs. process-driven).

But let's break down the Nginx vs. Apache performance comparison a bit more.

- Static Content: Nginx is insanely fast at serving static files (like HTML, CSS, images, and JavaScript). Because it doesn't have to "think" much, its event-driven model just streams the files out as fast as possible.

- Dynamic Content: This is where it gets interesting. Apache can handle dynamic content (like PHP or Python scripts) directly inside its own process. Nginx typically doesn't. Instead, it passes the request for dynamic content to a separate, dedicated service (like a PHP-FPM server) and then waits efficiently for the response. This sounds like an extra step, but it's a brilliant separation of concerns that keeps Nginx lean and fast.

- Configuration: This is a matter of opinion. Apache supports .htaccess files, which let you change configuration in any directory. That's flexible, but Apache has to check for those files on every request, which can slow things down. Nginx configuration is all done in one central file (or files it includes). It's cleaner and faster, but has a bit of a learning curve.
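Here's a minimal sketch of that split between static and dynamic content. It assumes a PHP site. The document root and the PHP-FPM address (127.0.0.1:9000) are hypothetical, so adjust them to your setup.

```nginx
server {
    listen 80;
    server_name your-cool-app.com;
    root /var/www/your-cool-app;      # hypothetical document root

    # Static files: Nginx serves them straight from disk
    location / {
        try_files $uri $uri/ =404;
    }

    # Dynamic content: hand .php requests to a separate PHP-FPM service
    location ~ \.php$ {
        try_files $uri =404;          # only run PHP files that actually exist
        include fastcgi_params;       # standard FastCGI variables shipped with Nginx
        # Tell PHP-FPM which script file to execute
        fastcgi_param SCRIPT_FILENAME $document_root$fastcgi_script_name;
        # Hypothetical PHP-FPM address; many setups use a Unix socket instead
        fastcgi_pass 127.0.0.1:9000;
    }
}
```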

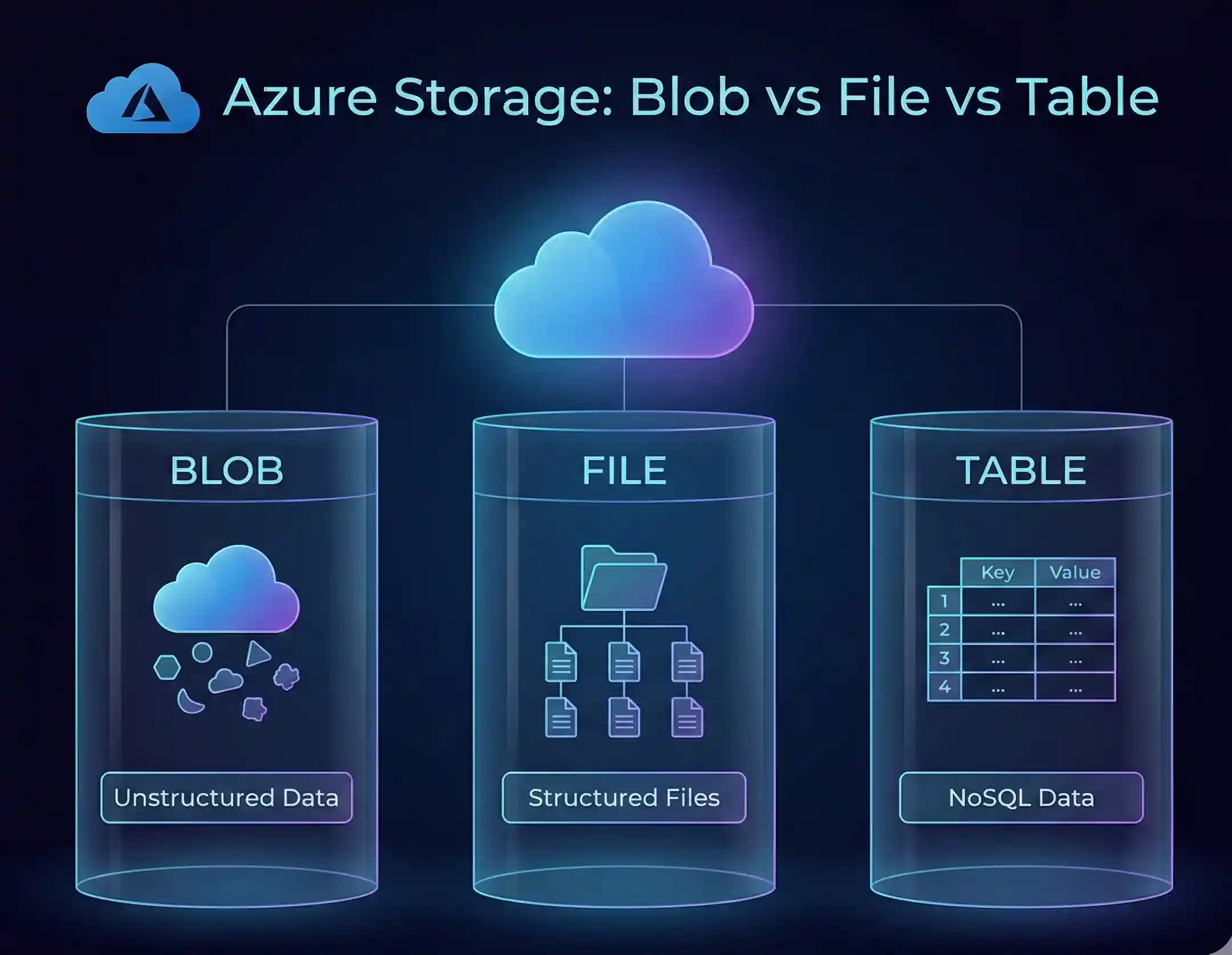

Here’s a simple comparison table:

| Feature | Nginx | Apache |
| --- | --- | --- |
| Architecture | Event-Driven (Asynchronous) | Process/Thread-Driven (Synchronous, in its classic modes) |
| Best For | High Concurrency, Static Content, Reverse Proxy | Flexibility, Shared Hosting, Dynamic Modules |
| Memory Use | Very Low | Higher (a process or thread per connection) |
| Configuration | Centralized .conf files | Central config plus per-directory .htaccess files |
| Performance | Excellent for high traffic | Good, but can struggle under heavy load |

The bottom line: I'm not here to say one is "bad." But for modern applications, especially those built with containers or that need to scale, Nginx's architecture just makes more sense.

The Swiss Army Knife: Primary Use Cases of Nginx

Okay, so Nginx is a great web server. But that's just job #1. The real magic happens when you use its other features.

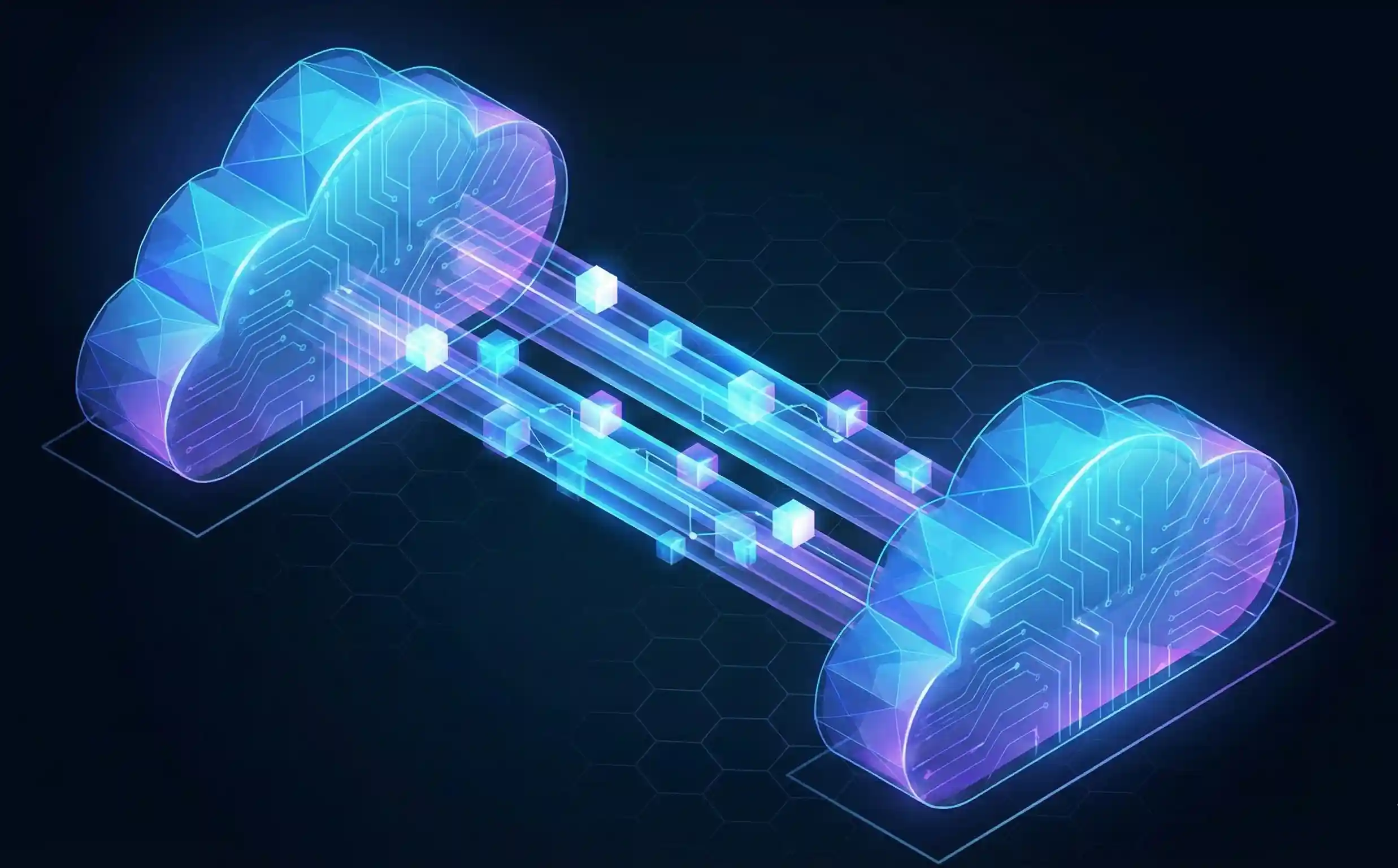

1. Nginx as a Reverse Proxy

This is probably its most popular use case.

So, what is a reverse proxy in Nginx?

- A forward proxy is what you might use at school or work. You (the client) go through it to reach the wider internet, and it can hide your identity from the sites you visit.

- A reverse proxy is the opposite. It sits in front of your web servers. Clients on the internet talk to it instead of talking to your servers directly, so it hides the servers' identity.

Imagine a big company's call center. You don't have the direct-dial number for every single employee. You just call one main number (the reverse proxy). The receptionist (Nginx) picks up, figures out who you need to talk to (which backend server), connects you, and passes the messages back and forth.

This is amazing for a few reasons:

- Security: Your backend servers (your "real" app servers) are hidden from the internet. Attackers can only reach the Nginx proxy directly, which gives you one place to filter and control traffic.

- Flexibility: You can have 10 different microservices running on 10 different ports, but to the outside world, it all just looks like one website: your-cool-app.com.

- Load Balancing: This leads us right to the next point...
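Here's a rough sketch of the "one website, many services" idea. The three services and their ports (3000, 4000, 8080) are hypothetical stand-ins for your own apps.

```nginx
server {
    listen 80;
    server_name your-cool-app.com;

    # Pass along the original host and client IP, since the backends
    # only ever see connections coming from Nginx itself
    proxy_set_header Host $host;
    proxy_set_header X-Real-IP $remote_addr;
    proxy_set_header X-Forwarded-For $proxy_add_x_forwarded_for;

    # Hypothetical microservices, each on its own local port
    location /api/ {
        proxy_pass http://127.0.0.1:3000;
    }

    location /auth/ {
        proxy_pass http://127.0.0.1:4000;
    }

    # Everything else goes to the main web app
    location / {
        proxy_pass http://127.0.0.1:8080;
    }
}
```

These `proxy_pass` lines have no path after the port, so Nginx forwards the original URI unchanged. A request for /api/users therefore reaches the API service as /api/users.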

2. Nginx as a Load Balancer

Because the Nginx reverse proxy is the single entry point, it can get smart about where it sends traffic.

Can Nginx be used for load balancing? Yes! It's one of its best features.

If you have one server, and it gets 100,000 visitors, it will probably crash.

If you have five servers, you can use Nginx as a load balancer to spread those 100,000 visitors out. With its default round-robin method, it sends roughly 20,000 to Server A, 20,000 to Server B, and so on.

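If one of your servers stops responding, Nginx notices the failed requests, temporarily stops sending traffic to it, and tries it again later. Open-source Nginx does this passively, based on real requests failing. Active health checks, which probe servers on a schedule, are an Nginx Plus feature. This is how sites achieve high availability.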
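Here's a sketch of the five-server setup described above, using hypothetical private IPs for Servers A through E. This `upstream` block lives inside `http { }` and is used from a location with `proxy_pass http://my_app_servers;`.

```nginx
upstream my_app_servers {
    # The default method is round-robin; uncomment to prefer the least-busy server
    # least_conn;

    # After 3 failed attempts within 30s, skip that server for 30s, then retry it
    server 10.0.0.1 max_fails=3 fail_timeout=30s;  # Server A
    server 10.0.0.2 max_fails=3 fail_timeout=30s;  # Server B
    server 10.0.0.3 max_fails=3 fail_timeout=30s;  # Server C
    server 10.0.0.4 max_fails=3 fail_timeout=30s;  # Server D
    server 10.0.0.5 max_fails=3 fail_timeout=30s;  # Server E
}
```

The `max_fails` and `fail_timeout` values here are examples; the defaults are 1 and 10s. `least_conn` is a built-in alternative to round-robin that sends each new request to the server with the fewest active connections.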

3. Nginx Caching

This one is all about speed. Nginx caching is a way to make your website feel instant.

When a user requests your homepage, Nginx can "cache" (or save) a copy of that finished page. Until that copy expires, the next visitors who ask for the homepage get the saved copy instantly, and Nginx doesn't even bother talking to your backend server.

This dramatically reduces the load on your server and makes for faster websites.
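Here's a minimal sketch of proxy caching. It assumes a hypothetical backend app on 127.0.0.1:8080, a cache directory of /var/cache/nginx, and a cache zone named app_cache (all example names). Both parts go inside the `http { }` block.

```nginx
# Where cached responses are stored on disk, plus a 10 MB shared-memory
# zone named app_cache that holds the cache keys
proxy_cache_path /var/cache/nginx levels=1:2 keys_zone=app_cache:10m
                 max_size=1g inactive=60m use_temp_path=off;

server {
    listen 80;

    location / {
        proxy_cache app_cache;              # use the zone defined above
        proxy_cache_valid 200 301 302 10m;  # keep successful responses for 10 minutes
        proxy_cache_valid 404 1m;           # cache "not found" only briefly
        # Report HIT, MISS, EXPIRED, etc. so you can see the cache working
        add_header X-Cache-Status $upstream_cache_status;
        proxy_pass http://127.0.0.1:8080;   # hypothetical backend app
    }
}
```

By default, Nginx won't cache responses that set cookies, or that the backend marks `no-cache`, `no-store`, or `private`. That keeps pages personalized for one user from being served to everyone. The X-Cache-Status header lets you check in your browser's dev tools whether a response was a HIT or a MISS.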

Getting Your Hands Dirty: A Peek at Nginx Configuration

Alright, let's look under the hood. How do I configure an Nginx server?

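Nginx is controlled by plain text files. The main one is usually /etc/nginx/nginx.conf. Site-specific configs often live in /etc/nginx/sites-available/ (a Debian/Ubuntu convention) or /etc/nginx/conf.d/ (common on other distributions). The syntax is clean and logical. It's all based on directives (commands) and blocks (groups of commands).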

Here’s what a super-simple setup for a reverse proxy might look like:

```nginx
# Required in a top-level nginx.conf, even if left empty
events {}

# This block handles all web traffic
http {
    # This 'upstream' block defines our group of backend servers
    upstream my_app_servers {
        server 10.0.0.1;
        server 10.0.0.2;
        server 10.0.0.3;
    }

    # This 'server' block defines one website
    server {
        listen 80;  # Listen for traffic on port 80 (standard HTTP)

        # This 'location' block says "for any request..."
        location / {
            # "...pass it to our group of app servers"
            proxy_pass http://my_app_servers;
        }
    }
}
```

See? It's not that scary. You're basically just writing rules for how traffic should flow.

This one simple file is where you'd handle all the powerful stuff we've talked about.

How to Enable SSL/HTTPS in Nginx

In 2026, if your site isn't using HTTPS, you're doing it wrong. Setting up SSL on an Nginx server is a must.

Back in the day, this was a huge pain. Now? It's incredibly easy, thanks to a free service called Let's Encrypt. You use a tool called "Certbot" with its Nginx plugin. It will automatically get your SSL certificate and even edit your Nginx config file for you.

Your Nginx SSL setup block will end up looking something like this:

```nginx
server {
    listen 443 ssl;  # Listen on port 443 (standard HTTPS)
    server_name your-cool-app.com;

    ssl_certificate /path/to/your/fullchain.pem;
    ssl_certificate_key /path/to/your/privkey.pem;

    # ... all your other settings ...
}
```
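Once HTTPS works, you'll usually also want a small port-80 block that sends plain-HTTP visitors to the secure site. Here's a minimal sketch, reusing the your-cool-app.com example:

```nginx
server {
    listen 80;
    server_name your-cool-app.com;

    # Permanently redirect every plain-HTTP request to the same URL over HTTPS
    return 301 https://$host$request_uri;
}
```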

Quick Tips for Nginx Performance Tuning

Once you're set up, you can add a few simple lines to your config for a major speed boost.

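- Enable Gzip Compression: This tells Nginx to "zip" your files (like HTML, CSS, JS) before sending them to the user. The user's browser unzips them instantly, and text-based files often shrink dramatically. The directive is `gzip on;`. Note that by default only HTML is compressed, so list other types (CSS, JavaScript, JSON) with `gzip_types`.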

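- Enable HTTP/2: This is a newer version of the web protocol that lets browsers download many files at once over a single connection. It's a big performance win. Traditionally you enable it with `listen 443 ssl http2;`. On Nginx 1.25.1 and newer, that parameter is deprecated in favor of a separate `http2 on;` directive.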

- Set Caching Headers: You can tell the user's browser how long to cache files, so they don't even have to ask for them again.
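Here's a rough sketch that combines those three tips, building on the HTTPS setup above. The file extensions, the 30-day lifetime, and the root path are example choices, not recommendations.

```nginx
http {
    # Compress text responses; text/html is always included,
    # other types must be listed explicitly
    gzip on;
    gzip_types text/css text/javascript application/javascript application/json image/svg+xml;

    server {
        listen 443 ssl;
        http2 on;  # Nginx 1.25.1+; older versions use "listen 443 ssl http2;" instead
        server_name your-cool-app.com;
        ssl_certificate /path/to/your/fullchain.pem;
        ssl_certificate_key /path/to/your/privkey.pem;

        # Let browsers cache static assets for 30 days
        # (sets both the Expires and Cache-Control: max-age headers)
        location ~* \.(css|js|png|jpg|svg|woff2)$ {
            root /var/www/your-cool-app;  # hypothetical path
            expires 30d;
        }
    }
}
```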

A Pro-Tip: Reloading Nginx Without Downtime

Here’s a tip that will make you look like a pro. Let's say you just edited your nginx.conf file. You need to apply the changes.

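- The Rookie Move: `sudo service nginx restart`. This stops Nginx completely and then starts it again. It's fast, but during that brief gap any active connections are dropped and new ones are refused. Not good.

- The Pro Move: `sudo nginx -s reload`. This tells Nginx to gracefully reload its configuration. It starts new worker processes with the new config, while the old workers finish serving their current requests before exiting. No connections are dropped, so it's a zero-downtime reload. Bonus tip: run `sudo nginx -t` first to check your config for errors. If the new config is broken anyway, a reload keeps the old one running.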

Nginx in the Modern Tech World

Nginx isn't just for old-school virtual servers. It's more relevant than ever.

- Using Nginx with Docker: This is a super common pattern. You'll run your Python, Node.js, or Java app inside a Docker container. But you'll also run an Nginx container right next to it. The Nginx container acts as the reverse proxy, handling all the incoming traffic, serving static files, and passing the dynamic requests to your app container.

- Kubernetes (K8s): In the world of K8s, Nginx powers some of the most widely used "Ingress Controllers." An Ingress Controller is the smart router that figures out which service inside your cluster a request is supposed to go to.

- Troubleshooting & Monitoring: When things go wrong, your first stop will be the Nginx logs (access.log and error.log, usually in /var/log/nginx/). You can also monitor Nginx server performance with the stub_status module. It gives you a quick snapshot of active connections and is included in most packaged builds.
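Here's a minimal sketch of the Docker pattern plus a stub_status endpoint. It assumes a hypothetical Docker Compose service named app listening on port 8000. It also assumes your static files are copied into the Nginx container under /usr/share/nginx/html/static/.

```nginx
server {
    listen 80;

    # Static files live inside the Nginx container itself
    location /static/ {
        root /usr/share/nginx/html;  # serves /usr/share/nginx/html/static/...
    }

    # Everything else goes to the app container; "app" is a hypothetical
    # Compose service name, resolved through Docker's internal DNS
    location / {
        proxy_pass http://app:8000;
        proxy_set_header Host $host;
    }

    # Quick health snapshot: active connections and request counters
    location = /nginx_status {
        stub_status;
        allow 127.0.0.1;  # only requests from inside this container
        deny all;
    }
}
```

Nginx looks up the app hostname when it starts, so the app container must be resolvable by then. The allow/deny rules restrict /nginx_status to requests from inside the Nginx container. You'd loosen them for a separate monitoring tool.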

So, What's the Big Takeaway?

If you're a tech student, Nginx is one of those tools you just need to know.

It's not just a web server. It's a high-performance traffic controller, a security guard, a load balancer, and a caching powerhouse all rolled into one. It’s the lightweight, efficient engine behind the modern web.

Understanding what Nginx is and why it's so dominant is a key piece of the puzzle for understanding how to build and scale applications.

My advice? Don't just read about it. Go get a cheap $5/month cloud server (or just use Docker on your laptop) and try to set it up. Host a simple static site. Then, try to set it up as a reverse proxy for a simple "Hello World" app. Getting your hands dirty is the fastest way to learn.

Further Learning & Official Resources

Don't just take my word for it. The best place to learn is from the source. They have tons of great documentation.

- Nginx Official Documentation: Nginx.org Docs

- Nginx Beginner’s Guide: Nginx.com Beginner's Guide

- Let's Encrypt (for free SSL): LetsEncrypt.org

Got a question or your own favorite Nginx trick? Drop it in the comments. I'd love to hear what you're building!
